Creative Coding

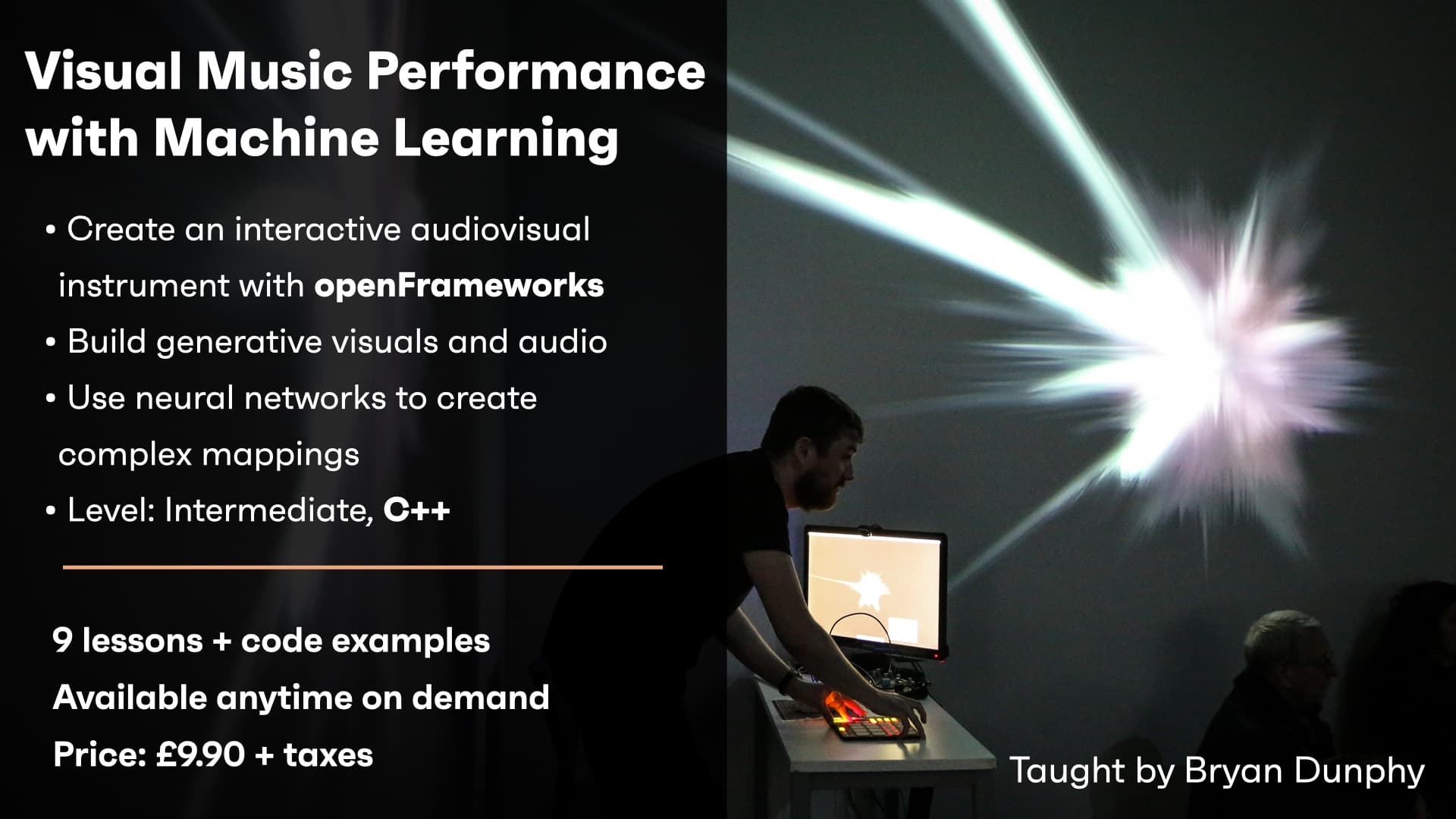

Visual Music Performance with Machine Learning - On demand

Course overview

Learn to build a real-time audiovisual instrument in openFrameworks.

Learning outcomes

Create procedural audio in openFrameworks using ofxMaxim

Discuss interactive machine learning techniques

Use a neural network to control multiple audiovisual parameters simultaneously in real time

Who is this course for?

- In this workshop you will use openFrameworks to build a real-time audiovisual instrument. You will generate dynamic abstract visuals in openFrameworks and procedural audio with the ofxMaxim addon. You will then learn to control the audiovisual material by mapping controller input to audio and visual parameters with the ofxRapidLib addon.
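As a rough illustration of what "mapping controller input to audio parameters" means, here is a minimal sketch in plain C++ with no openFrameworks, ofxMaxim or ofxRapidLib dependency. It is not the course code: `FmParams`, `mapController` and `renderFm` are hypothetical names, the parameter ranges are arbitrary, and a hand-written linear mapping stands in for the trained model used in the workshop. The synth is a two-operator FM voice written in its usual phase-modulation form.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical parameter set for a two-operator FM voice (illustrative only).
struct FmParams {
    double carrierHz;  // frequency of the audible oscillator
    double modHz;      // frequency of the modulating oscillator
    double modIndex;   // modulation depth, in radians of phase deviation
};

// Map a normalised controller position (x, y in [0, 1]) to synth parameters.
// Assumption: a fixed linear mapping; in the workshop a trained model
// takes the place of this hand-written function.
FmParams mapController(double x, double y) {
    x = std::fmin(std::fmax(x, 0.0), 1.0);  // clamp out-of-range input
    y = std::fmin(std::fmax(y, 0.0), 1.0);
    FmParams p;
    p.carrierHz = 110.0 + x * 770.0;  // 110 Hz to 880 Hz
    p.modHz = p.carrierHz * 2.0;      // fixed 2:1 modulator-to-carrier ratio
    p.modIndex = y * 8.0;             // 0 (pure sine) to 8 (bright spectrum)
    return p;
}

// Render numSamples of mono audio in [-1, 1] for the given parameters.
std::vector<double> renderFm(const FmParams& p, std::size_t numSamples,
                             double sampleRate = 44100.0) {
    const double twoPi = 6.283185307179586;
    std::vector<double> out(numSamples);
    for (std::size_t n = 0; n < numSamples; ++n) {
        const double t = static_cast<double>(n) / sampleRate;
        // The modulator output is added to the carrier's phase.
        const double modulator = p.modIndex * std::sin(twoPi * p.modHz * t);
        out[n] = std::sin(twoPi * p.carrierHz * t + modulator);
    }
    return out;
}
```

Calling `renderFm(mapController(mouseX, mouseY), 512)` with a normalised mouse position would fill one 512-sample buffer. Replacing `mapController` with a model trained on example input/parameter pairs is the step that interactive machine learning adds, because it lets a few demonstrations define the mapping instead of hand-tuned ranges.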

Requirements

- A computer and internet connection

- A webcam and microphone

- A Zoom account

- An installed version of openFrameworks

- The ofxMaxim and ofxRapidLib addons, downloaded

- Access to a MIDI/OSC controller (optional; a mouse or trackpad will also suffice)

Course content

What you will learn in this course

1 resource, 3 lessons

- Course Overview

- Requirements

- Pre-course preparation

- Work sheet with exercises

Visual Music Performance with Machine Learning - On demand

9 videos, 2 lessons

Part 1 - Sphere setup

Part 2 - Phong lighting

Part 3 - Camera + Normal matrix

Part 4 - Vertex displacement

Part 5 - ofxMaxim setup

Part 6 - Simple FM synth

Part 7 - Machine Learning - Data collection

Part 8 - Machine Learning - Train + Run model

Part 9 - OSC controller

- Finished Project on GitHub

- Was this course the right level?

Instructors

Bryan Dunphy

Bryan Dunphy completed a PhD at Goldsmiths, University of London, in 2021. He specialises in immersive audiovisual performances and creations, and most of his work uses machine learning.